LLMWise vs Prompt Builder

Side-by-side comparison to help you choose the right product.

LLMWise offers a single API for seamless access to top AI models, optimizing costs with pay-per-use flexibility.

Last updated: February 26, 2026

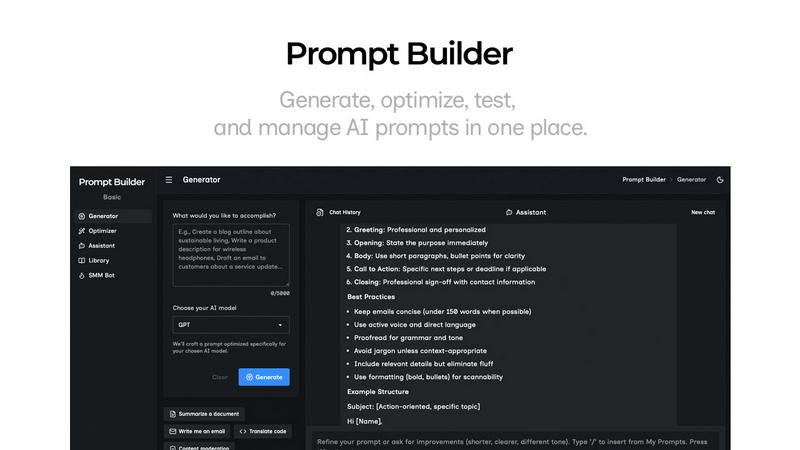

Prompt Builder

Craft, refine, and reuse perfect AI prompts in seconds to get consistent results across all models.

Last updated: April 13, 2026

Feature Comparison

LLMWise

Smart Routing

LLMWise's smart routing automatically directs each prompt to the model best suited to the task. For example, coding questions are sent to GPT, while creative writing tasks are routed to Claude. Selecting the model by task type is intended to give users faster and more accurate results.
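
As a rough illustration of task-based routing, the sketch below classifies a prompt with simple keyword matching and looks up a model family. It is not LLMWise's actual routing logic or API: the `ROUTES` table, the keyword lists, the `DEFAULT_MODEL` fallback, and the `classify` and `route` functions are all hypothetical, and a production router would likely use a trained classifier instead of keywords.

```python
from typing import Dict, Tuple

# Hypothetical routing table: task type -> model family named in the text above.
ROUTES: Dict[str, str] = {
    "code": "gpt",
    "creative": "claude",
}
DEFAULT_MODEL = "gpt"  # assumption: fallback when no rule matches

# Illustrative keyword hints only; these lists are invented for the example.
KEYWORDS: Dict[str, Tuple[str, ...]] = {
    "code": ("function", "bug", "compile", "python", "refactor"),
    "creative": ("story", "poem", "slogan", "narrative"),
}


def classify(prompt: str) -> str:
    """Return a coarse task type by counting keyword hits in the prompt."""
    text = prompt.lower()
    scores = {
        task: sum(word in text for word in words)
        for task, words in KEYWORDS.items()
    }
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"


def route(prompt: str) -> str:
    """Pick a model family for the prompt, falling back to the default."""
    return ROUTES.get(classify(prompt), DEFAULT_MODEL)
```

Keeping classification separate from the lookup table means the routing rules can change without touching the classifier.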

Compare & Blend

The compare mode enables users to run prompts across multiple models side-by-side. This feature allows developers to see which model performs best for specific tasks. Additionally, the blend functionality combines the best outputs into a single, cohesive response, ensuring that users receive the highest quality answers possible.
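
The compare-then-blend idea can be sketched as follows. This is an assumption-laden illustration, not LLMWise's implementation: models are represented as plain Python callables, `compare` and `blend` are hypothetical names, and the blending step is a naive stand-in that merges unique sentences in ranked order rather than synthesizing a new answer with a model.

```python
from typing import Callable, Dict, List, Set

ModelFn = Callable[[str], str]  # hypothetical: a model is any prompt -> text callable


def compare(prompt: str, models: Dict[str, ModelFn]) -> Dict[str, str]:
    """Run the same prompt through every model and collect outputs by name."""
    return {name: fn(prompt) for name, fn in models.items()}


def blend(outputs: Dict[str, str], score: Callable[[str], float]) -> str:
    """Naive blend: rank outputs by score, then keep each unique sentence once."""
    ranked = sorted(outputs.values(), key=score, reverse=True)
    seen: Set[str] = set()
    merged: List[str] = []
    for text in ranked:
        for sentence in text.split(". "):
            cleaned = sentence.strip().rstrip(".")
            if cleaned and cleaned.lower() not in seen:
                seen.add(cleaned.lower())
                merged.append(cleaned)
    return ". ".join(merged) + "." if merged else ""
```

The `score` function is where a team would encode what "best" means for its task, such as length, keyword coverage, or a rating from a judge model.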

Always Resilient

With built-in circuit-breaker failover, LLMWise is designed to keep requests flowing even if a specific model goes down. If a provider experiences downtime, requests automatically reroute to backup models so that applications remain operational.
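
For readers unfamiliar with the pattern, here is a minimal sketch of circuit-breaker failover. It shows the general technique only and makes no claim about how LLMWise implements it: the `CircuitBreaker` class, the `call_with_failover` helper, and the `max_failures` and `reset_after` parameters are hypothetical names chosen for this example.

```python
import time
from typing import Callable, List, Optional, Tuple


class CircuitBreaker:
    """Opens after repeated failures, then allows a trial call after a cool-down."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def available(self) -> bool:
        if self.opened_at is None:
            return True  # closed: calls pass through
        # Half-open: permit a trial call once the cool-down has elapsed.
        return self.clock() - self.opened_at >= self.reset_after

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()  # open (or re-open) the circuit


Provider = Tuple[str, Callable[[str], str], CircuitBreaker]


def call_with_failover(prompt: str, providers: List[Provider]) -> Tuple[str, str]:
    """Try providers in priority order, skipping any whose circuit is open."""
    errors: List[str] = []
    for name, fn, breaker in providers:
        if not breaker.available():
            continue
        try:
            result = fn(prompt)
        except Exception as exc:
            breaker.record_failure()
            errors.append(f"{name}: {exc}")
            continue
        breaker.record_success()
        return name, result
    raise RuntimeError("all providers unavailable: " + "; ".join(errors))
```

Once a provider's circuit opens, later requests skip it immediately instead of waiting for another failed call, which is what keeps latency stable during an outage.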

Test & Optimize

LLMWise includes robust benchmarking suites, allowing users to conduct batch tests and implement optimization policies for speed, cost, or reliability. Automated regression checks ensure that the performance of the models remains consistent over time, enabling developers to focus on building instead of troubleshooting.
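
A batch test with a regression check might look like the sketch below. The test-case format, the substring-based pass criterion, the `pass_rate` and `regressions` functions, and the `tolerance` parameter are all assumptions made for illustration; they are not taken from LLMWise's benchmarking suite.

```python
from typing import Callable, Dict, List, Tuple

Case = Tuple[str, str]  # hypothetical format: (prompt, substring expected in the answer)


def pass_rate(model: Callable[[str], str], cases: List[Case]) -> float:
    """Fraction of cases whose output contains the expected substring."""
    passed = sum(
        expected.lower() in model(prompt).lower() for prompt, expected in cases
    )
    return passed / len(cases)


def regressions(current: Dict[str, float], baseline: Dict[str, float],
                tolerance: float = 0.05) -> List[str]:
    """Names of models whose pass rate fell more than `tolerance` below baseline."""
    return sorted(
        name for name, base in baseline.items()
        if current.get(name, 0.0) < base - tolerance
    )
```

Running `pass_rate` on a schedule and feeding the results to `regressions` gives a simple alert when a model's behavior drifts.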

Prompt Builder

Prompt Generator

The Prompt Generator is your starting point for rapid, high-quality prompt creation. Simply describe your task or idea in everyday language and select your target AI model—such as GPT-4, Claude 3, or Gemini Pro. The Generator then crafts a professional-grade, model-tuned prompt draft that aligns with the specific structural preferences and capabilities of that model. This foundational draft is designed for immediate refinement, kicking off the iterative cycle of improvement that defines the Prompt Builder experience and saving you from starting with a blank page.

Prompt Assistant & Chat Workspace

This integrated chat environment allows you to test, iterate, and perfect your prompts without ever leaving the platform. Select from various assistant models like Grok, DeepSeek, or Gemini to run your generated or optimized prompts. Engage in follow-up conversations to refine the output, and use the chat history to track your iterative progress. You can instantly insert prompts from your Library or the Generator for testing, creating a seamless loop of execution and enhancement that keeps all your experimentation organized and accessible.

Prompt Optimizer

The Optimizer elevates your existing prompts through structured refinement. Paste any prompt—whether from an old chat thread or your Library—and the Optimizer analyzes it for improvements in clarity, constraints, output format, and examples. It provides a clearer, more effective version in seconds. Each optimization is auto-saved in your history, allowing you to compare versions, pin your favorites, and with one click, run the new prompt in the Assistant to immediately test its improved performance, continuing the cycle of refinement.

Prompt Library & Community Templates

Your central hub for storing, organizing, and discovering prompts. Save your best, pinned prompt versions from the Generator or Optimizer into your personal Library for easy reuse across projects. Search and filter by category and model. Furthermore, explore a growing collection of Community Prompts and templates, allowing you to leverage proven structures from other users, adapt them to your needs, and add them to your own collection, fostering a continuous cycle of shared learning and improvement.

Use Cases

LLMWise

Software Development

Developers can utilize LLMWise to streamline coding tasks by routing requests to the most suitable models. For instance, using GPT for code generation while leveraging Claude for documentation can enhance the overall development workflow.

Creative Writing

Content creators can use LLMWise's compare and blend features to run a prompt through several models suited to creative writing. By comparing and synthesizing the outputs, they can produce narratives or marketing content that resonates with their audiences.

Translation Services

Translators can take advantage of LLMWise by selecting the best models for language translation tasks. The platform's smart routing ensures that requests are handled by the most effective LLM for each specific language pair, leading to more accurate translations.

Quality Assurance

Quality assurance teams can use the compare mode to evaluate the outputs of various models against predetermined benchmarks. This allows them to identify strengths and weaknesses, ensuring that the best model is consistently used for production tasks, resulting in higher quality deliverables.

Prompt Builder

Content Creation & Marketing

Content teams and marketers can break free from creative block and inconsistency. Use the SMM Bot to generate platform-ready social posts for LinkedIn, X, or TikTok from a single brief, then refine the tone and hooks in the chat workspace. Save high-performing marketing copy, blog outlines, or email sequences to your Library, creating a reusable asset bank that evolves and improves with each campaign, ensuring brand voice consistency and saving countless hours.

Technical Development & Coding

Developers and engineers can streamline their AI-assisted coding workflow. Generate precise prompts for code generation, debugging, or documentation tailored for models like Claude or GPT. Test different prompt structures in the Assistant to get optimal code snippets, then save the most effective technical prompts to your Library. This creates a personal knowledge base of reliable prompts for common tasks, turning sporadic assistance into a systematic, repeatable development tool.

Research & Analysis

Researchers, analysts, and students can accelerate their information synthesis. Craft detailed prompts for summarizing complex papers, extracting data insights, or comparing concepts across multiple sources. The model-specific tuning ensures higher-quality, more relevant outputs from the start. The iterative chat allows for deep dives with follow-up questions, and all research prompts and their refined versions are saved, making it easy to replicate successful analysis frameworks for future projects.

Business Process Automation

Business professionals and entrepreneurs can systemize repetitive AI tasks. Create and optimize prompts for generating reports, drafting standard communications, analyzing customer feedback, or brainstorming business strategies. By saving these operational prompts in the Library, you build an internal "playbook" that any team member can use, ensuring processes are efficient, scalable, and continuously improved upon, directly translating to increased productivity and standardized output quality.

Overview

About LLMWise

LLMWise is a cutting-edge API solution that streamlines access to multiple large language models (LLMs), including major providers such as OpenAI, Anthropic, Google, Meta, xAI, and DeepSeek. Designed specifically for developers, LLMWise eliminates the complexity of managing diverse AI services by providing a single interface. Its intelligent routing feature ensures that every prompt is sent to the most suitable model, whether it is GPT for coding, Claude for creative writing, or Gemini for translation tasks. The platform enhances productivity and efficiency by allowing users to compare outputs across different models, blend responses for optimal results, and utilize advanced failover mechanisms to maintain application stability. With LLMWise, you can optimize your AI tasks without the burden of multiple subscriptions and API keys, making it the ideal choice for teams seeking the best AI solutions without unnecessary expenses or complexity.

About Prompt Builder

Prompt Builder is the definitive prompt engineering workspace designed to transform how individuals and teams interact with AI. It eliminates the frustrating, time-consuming cycle of manually crafting and rewriting prompts for different models. Instead, it provides a streamlined, iterative environment where you can describe a task in plain English, generate a model-optimized draft in seconds, and then refine it through continuous chat-based improvement. This cyclical process of generate, test, refine, and save ensures your prompts evolve from rough ideas to precision tools. Built for content creators, marketers, developers, and anyone who relies on consistent AI outputs, Prompt Builder's core value is turning hours of prompt guesswork into a reliable, repeatable workflow. It consolidates your entire prompt lifecycle—from initial creation with its intelligent Generator and Optimizer, to testing in the built-in Assistant with multiple AI models, to saving and reusing perfected versions in your personal or community Library—into one powerful, unified platform.

Frequently Asked Questions

LLMWise FAQ

What models can I access with LLMWise?

LLMWise provides access to 62 models from 20 different providers, including OpenAI's GPT, Anthropic's Claude, Google's Gemini, and many others. This extensive library allows users to choose the best model for each task.

Is there a subscription fee for LLMWise?

No, LLMWise operates on a pay-as-you-go model. Users can start for free with trial credits and only pay for what they use, making it a cost-effective solution without the burden of monthly subscriptions.

How does LLMWise ensure reliability?

LLMWise includes a circuit-breaker failover feature that automatically reroutes requests to backup models if a primary model goes down. This ensures that your applications remain functional and reliable at all times.

Can I use my existing API keys with LLMWise?

Yes, LLMWise supports "Bring Your Own Key" (BYOK) functionality. This means you can plug in your existing API keys from various providers, allowing you to maintain control over costs while benefiting from LLMWise's features.

Prompt Builder FAQ

Which AI models does Prompt Builder support?

Prompt Builder is designed as a universal prompt workspace. It supports prompt generation and optimization for a wide range of models including OpenAI's GPT series, Anthropic's Claude, Google's Gemini, Meta's Llama, Mistral AI, DeepSeek, xAI's Grok, Perplexity, and Cohere. The built-in Prompt Assistant allows you to run and test prompts directly with many of these models, including Grok, Gemini, GPT, and DeepSeek, without switching applications.

How does the "model-optimized" prompt generation work?

When you use the Prompt Generator, you first select your target AI model (e.g., Claude 3). The system then tailors the structure, constraints, and suggested output format of the generated prompt to align with the known best practices, strengths, and expected input styles of that specific model. This means you get a first draft that is more likely to produce a high-quality, relevant response on the first try, reducing the need for extensive rewrites and token-wasting retries.
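
To make the idea of model-specific tailoring concrete, the sketch below formats the same task differently depending on the target model. It is purely illustrative and does not reflect Prompt Builder's internals: the `build_prompt` function and both layouts (tag-delimited sections for Claude, headed sections for everything else) are assumptions chosen for the example.

```python
def build_prompt(task: str, model: str, output_format: str = "plain text") -> str:
    """Wrap a task description in a layout chosen by target model family."""
    family = model.lower().split()[0]  # e.g. "Claude 3" -> "claude"
    if family == "claude":
        # Assumed style: tag-delimited sections.
        return (
            f"<task>\n{task}\n</task>\n"
            f"<output_format>\n{output_format}\n</output_format>"
        )
    # Assumed default style: headed sections.
    return f"## Task\n{task}\n\n## Output format\n{output_format}"
```

For example, `build_prompt("Summarize this paper", "Claude 3", "three bullet points")` yields a tag-delimited draft, while the same call with `"GPT-4"` yields the headed layout.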

What is included in the free plan?

The free plan offers a robust starting point to experience the core Prompt Builder cycle. It includes 25 assistant requests per month, allowing you to generate, test, and refine prompts within the platform. You get access to the Prompt Generator, Optimizer, and Library to save your work. This enables you to fully test the iterative workflow of creating model-tuned prompts, improving them, and building a personal collection—all without requiring a credit card.

Can I collaborate with my team on prompts?

The current focus is on the individual user's iterative workflow and personal Library. Even so, the ability to save, organize, and reuse prompts lays a foundation for team collaboration: team members can build a library of optimized, proven prompts for common business tasks and share them with one another outside the platform. The platform's structure supports standardizing best practices across a group, so that everyone uses the most effective prompts and contributes to their continuous refinement.

Alternatives

LLMWise Alternatives

LLMWise is a cutting-edge API that streamlines access to major language models, including GPT, Claude, Gemini, and others. It belongs to the AI Assistants category, aiming to simplify the process of managing multiple AI providers by intelligently routing prompts to the most suitable model for each task. Users often seek alternatives due to concerns about pricing structures, specific feature sets, or the compatibility of platforms with their unique requirements. When searching for an alternative, consider the flexibility of pricing, the range of supported models, and the ease of integration with existing systems. Look for options that offer robust performance metrics, resilience features, and an intuitive user interface. These factors can significantly enhance the efficiency and effectiveness of your AI-driven applications.

Prompt Builder Alternatives

Prompt Builder is a comprehensive AI prompt engineering workspace. It belongs to the category of AI assistants designed to streamline the process of creating, testing, and managing prompts for various large language models. Users can transform a simple idea into a polished, effective prompt in seconds, all within a single, organized interface. Users often explore alternatives for several practical reasons. These can include budget constraints, the need for specific integrations with other platforms, or a desire for different feature sets like advanced collaboration tools or specialized testing environments. The search for the right tool is a natural part of finding the optimal workflow fit. When evaluating other options, consider your core needs. Look for a tool that supports the AI models you use most, offers a robust method for testing and iterating on prompts, and provides a way to organize your work efficiently. The goal is to find a solution that turns the iterative process of prompt refinement into a smooth, continuous cycle of improvement.