aiga_ vs OpenMark AI

Side-by-side comparison to help you choose the right product.

AI art, evolving stories, solo or social play.

OpenMark AI continuously benchmarks over 100 LLMs on your actual task to find the best model for cost, speed, and quality.

Last updated: March 26, 2026

Visual Comparison

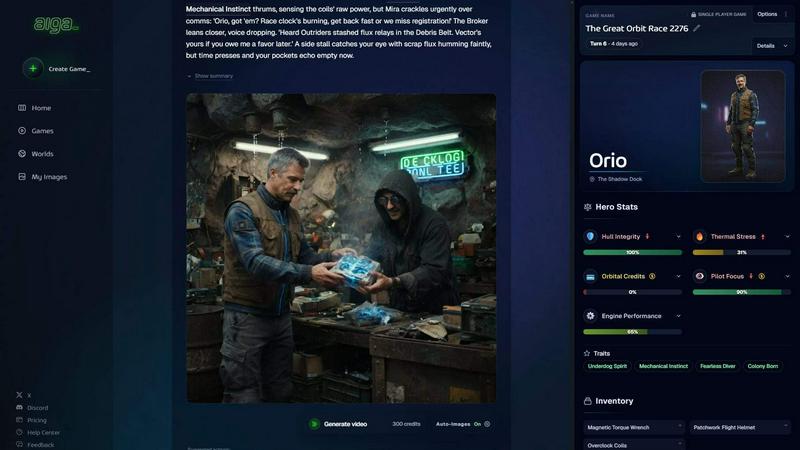

aiga_

OpenMark AI

Overview

About aiga_

aiga_ is a living story engine that transforms interactive fiction into a visual reality. Unlike static text adventures, aiga_ uses AI to generate style-consistent artwork and evolving narratives in real time. Every choice you make carries weight, reshaping the world through a GameBook system that tracks stats, inventory, and complex character relationships. You can choose from over 15 art styles or upload a personal reference image to keep your hero visually consistent across every scene.

The platform features dynamic NPCs who possess memory and unique motivations, allowing you to chat freely with them or listen as AI voice narration brings the world to life. Designed for seamless community play, aiga_ offers full cross-progression across Web, Discord, Telegram, and X. Whether you are a solo storyteller, a TTRPG group, or a brand engaging an audience, aiga_ provides a limitless, multilingual platform where no two playthroughs are ever the same.

About OpenMark AI

OpenMark AI is a web application designed to end the guesswork in selecting large language models (LLMs) for production applications. It provides a task-level benchmarking platform where developers and product teams can describe their specific use case in plain language and run the same prompts against a catalog of over 100 models in a single session. The core value proposition is actionable, real-world data for pre-deployment decisions. Instead of relying on marketing claims or single, lucky outputs, OpenMark AI shows you performance variance, scored quality, real API latency, and actual cost per request across repeat runs. This iterative approach to testing lets you keep improving your model selection based on hard evidence, not hunches. The platform uses a hosted credit system, eliminating the need to manage and configure separate API keys for each provider, such as OpenAI, Anthropic, or Google. It is designed for teams that prioritize cost efficiency (the optimal balance of quality relative to price) and need confidence that a model will deliver consistent, stable results every time it is called in a live feature.
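
To make the idea of comparing models across repeat runs concrete, here is a minimal Python sketch of how per-run measurements could be rolled up into the metrics named above: quality, variance, latency, cost per request, and quality relative to price. It is not OpenMark AI's API or scoring method. The `Run` record, the `summarize` function, the model names, and every number in `RUNS` are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Run:
    """One benchmark run of one model on the same prompt (hypothetical record)."""
    model: str
    quality: float    # scored quality, assumed to lie between 0 and 1
    latency_s: float  # measured API latency in seconds
    cost_usd: float   # cost of this single request in US dollars


def summarize(runs):
    """Group runs by model and aggregate quality, variance, latency, and cost."""
    by_model = {}
    for run in runs:
        by_model.setdefault(run.model, []).append(run)

    summary = {}
    for model, model_runs in by_model.items():
        qualities = [r.quality for r in model_runs]
        avg_quality = mean(qualities)
        avg_cost = mean(r.cost_usd for r in model_runs)
        summary[model] = {
            "runs": len(model_runs),
            "mean_quality": avg_quality,
            # Spread of quality across repeat runs: lower means more stable.
            "quality_stdev": pstdev(qualities),
            "mean_latency_s": mean(r.latency_s for r in model_runs),
            "mean_cost_usd": avg_cost,
            # Quality relative to price, one possible cost-efficiency measure.
            "quality_per_usd": avg_quality / avg_cost if avg_cost > 0 else float("inf"),
        }
    return summary


# Made-up illustrative data, not real benchmark results.
RUNS = [
    Run("model-a", quality=0.9, latency_s=1.0, cost_usd=0.004),
    Run("model-a", quality=0.7, latency_s=1.2, cost_usd=0.004),
    Run("model-b", quality=0.75, latency_s=0.5, cost_usd=0.001),
    Run("model-b", quality=0.75, latency_s=0.7, cost_usd=0.001),
]

summary = summarize(RUNS)
best = max(summary, key=lambda m: summary[m]["quality_per_usd"])

for model, stats in summary.items():
    print(model, stats)
print("most cost-efficient:", best)
```

In this made-up data, one model has the higher single best score while the other is steadier and cheaper per request, which is the kind of trade-off a single lucky output would hide.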